8 cost-free top tips every website owner should be following

Last updated on August 24th, 2024

Whether you have a blog, business card or e-commerce website, getting the basics right can make sure your site is secure, functional and search engine friendly. This ensures you get a natural flow of traffic, with minimal effort and cost.

Whilst this article is focused on cost-free security and search engine optimisation tips, it would be remiss of me not to mention the importance of a reliable web host. If your site is unreliable or slow, it will impact your search engine ranking and visitor retention. If your web host is letting you down, consider reading my genuine review of Hostinger Premium Web Hosting that BeachyUK.com is hosted with currently.

Here are eight simple cost-free tips that will help you make sure your website is getting the basics right.

1. Set up and test your SSL/TLS certificate (use HTTPS)

Security is finally not just something for us techies to talk about. Website certificates are a must-have. Without one, your site will be labelled “Not secure” by web browsers, and search engines will reduce your ranking, making it less likely you’ll get traffic.

This tip doesn’t have to cost you anything either! SSL certificate providers such as LetsEncrypt.org, Sectigo (formerly known as Comodo) and Cloudflare (if you’re using their free core service) offer website certificates for free.

I recommend Cloudflare, if you’re already using it. Otherwise I would look at your web hosting provider’s control panel. Many web hosting providers integrate with LetsEncrypt automatically, making it quick and easy to sign up and maintain your certificate. If your web host doesn’t have a free certificate option in their control panel, use sslforfree.com for a guided walk through the LetsEncrypt signup process.

Force website traffic to use HTTPS

Once you’ve applied a certificate to your website, don’t forget to force all traffic to the HTTPS address. This ensures every connection is secure, reassuring search engines and users alike. You can often do this from your web hosting provider’s control panel, or Cloudflare if you use it. Alternatively, you can add the following to your .htaccess file.

# Redirects to HTTPS WWW version of site - first condition forces WWW sub-domain, second condition forces HTTPS

<IfModule mod_rewrite.c>

RewriteEngine On

RewriteCond %{HTTP_HOST} !^www\.

RewriteRule ^(.*)$ https://www.%{HTTP_HOST}/$1 [R=301,L]

RewriteCond %{HTTPS} off

RewriteRule ^(.*)$ https://%{HTTP_HOST}/$1 [R=301,L]

</IfModule>

If you don’t use the WWW sub-domain for all content, simply add the below instead.

# Redirects to HTTPS version of site

<IfModule mod_rewrite.c>

RewriteEngine On

RewriteCond %{HTTPS} off

RewriteRule ^(.*)$ https://%{HTTP_HOST}/$1 [R=301,L]

</IfModule>

Test your SSL/TLS regularly

Your TLS setup should be tested regularly to check that the certificate is valid and that the server doesn’t support weak protocols and ciphers. I recommend using the SSL Labs free tool for this: https://www.ssllabs.com/ssltest/. SSL Labs is provided by Qualys, a company with a strong reputation for security scanning.

The SSL Labs checks are thorough, so they take a couple of minutes to complete. If you just want a quick check that your certificate is working, you can use https://www.sslchecker.com/sslchecker for a simpler result.
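If you’d like to automate a basic expiry check between full scans, a minimal Python sketch might look like the below. It only reads the certificate’s expiry date, so it is no substitute for the SSL Labs report. The function names `fetch_not_after` and `days_remaining` are my own choices for illustration, and it assumes your site answers on the standard HTTPS port 443.

```python
import socket
import ssl
import time
from typing import Optional


def fetch_not_after(hostname: str, port: int = 443, timeout: float = 10.0) -> str:
    """Connect over TLS and return the certificate's 'notAfter' string."""
    # The default context verifies the certificate chain and the hostname,
    # so an invalid certificate raises ssl.SSLError here.
    context = ssl.create_default_context()
    with socket.create_connection((hostname, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=hostname) as tls:
            return tls.getpeercert()["notAfter"]


def days_remaining(not_after: str, now: Optional[float] = None) -> float:
    """Convert a 'notAfter' string into days left, measured from 'now'."""
    expires = ssl.cert_time_to_seconds(not_after)
    if now is None:
        now = time.time()  # current time in seconds since the epoch
    return (expires - now) / 86400
```

Calling `days_remaining(fetch_not_after("www.example.com"))` (with your own domain in place of the placeholder) returns the number of days left, which you could check from a scheduled job and alert on when it drops below a threshold you choose.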

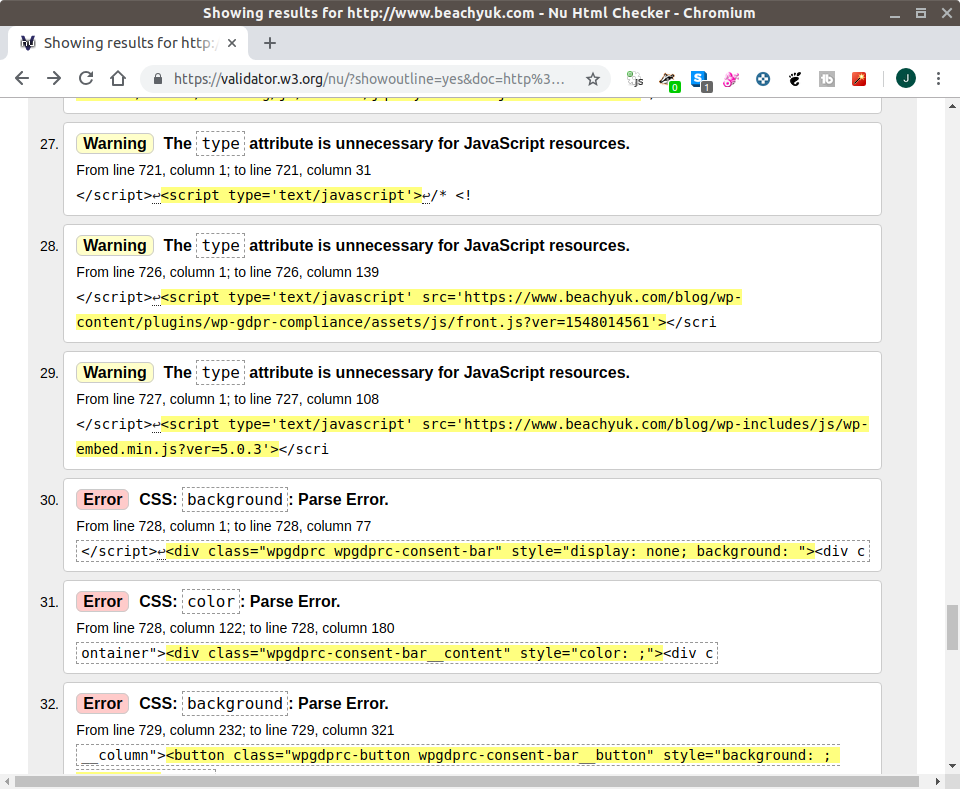

2. Check your code with validator.w3.org

When creating your site, you probably used a template, or code snippets you found online. You may even have reused code you’ve used on other sites. HTML/XML standards evolve though, so your code needs to be consistent with one standard.

In reality, many sites are a mix of different HTML/XML standards, or are missing elements. This results in them being inconsistent or inaccessible in some browsers, screen readers, and search engine bots.

Validator.w3.org is an online tool that will crawl a page you point it at, and compare it to the standards. It will recommend changes as a result. I choose w3.org, as the site is run by the community behind the web standards, so they know what they’re talking about!

This validator ALWAYS finds things I’ve forgotten to include in my coding. These tiny changes can make all the difference with search engines in particular (e.g. content type, language etc.), as they need extra detail to understand your site content. As search engines look to rate the quality of the site for search rankings, they see poor coding as a poor quality site. It’s therefore well worth looking at such validators.
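To give a feel for the kind of detail involved, here is a rough Python sketch that looks for three of the basics (page language, character encoding and title) in a page’s HTML. It is only an illustration using Python’s built-in `html.parser` module and is not how validator.w3.org works; the class and function names are hypothetical, and the real validator checks far more.

```python
from html.parser import HTMLParser


class BasicsChecker(HTMLParser):
    """Records whether a page declares its language, charset and title."""

    def __init__(self):
        super().__init__()
        self.has_lang = False
        self.has_charset = False
        self.has_title = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "html" and attributes.get("lang"):
            self.has_lang = True
        elif tag == "meta":
            # Accept <meta charset="..."> or the older http-equiv form.
            http_equiv = (attributes.get("http-equiv") or "").lower()
            if "charset" in attributes or http_equiv == "content-type":
                self.has_charset = True
        elif tag == "title":
            self.has_title = True


def missing_basics(html_text):
    """Return a list of the basic declarations the page does not contain."""
    checker = BasicsChecker()
    checker.feed(html_text)
    problems = []
    if not checker.has_lang:
        problems.append("html lang attribute")
    if not checker.has_charset:
        problems.append("character encoding declaration")
    if not checker.has_title:
        problems.append("title element")
    return problems
```

Passing a page’s source to `missing_basics` returns an empty list when all three are present. Treat it as a quick pre-check before running the full validator, not a replacement for it.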

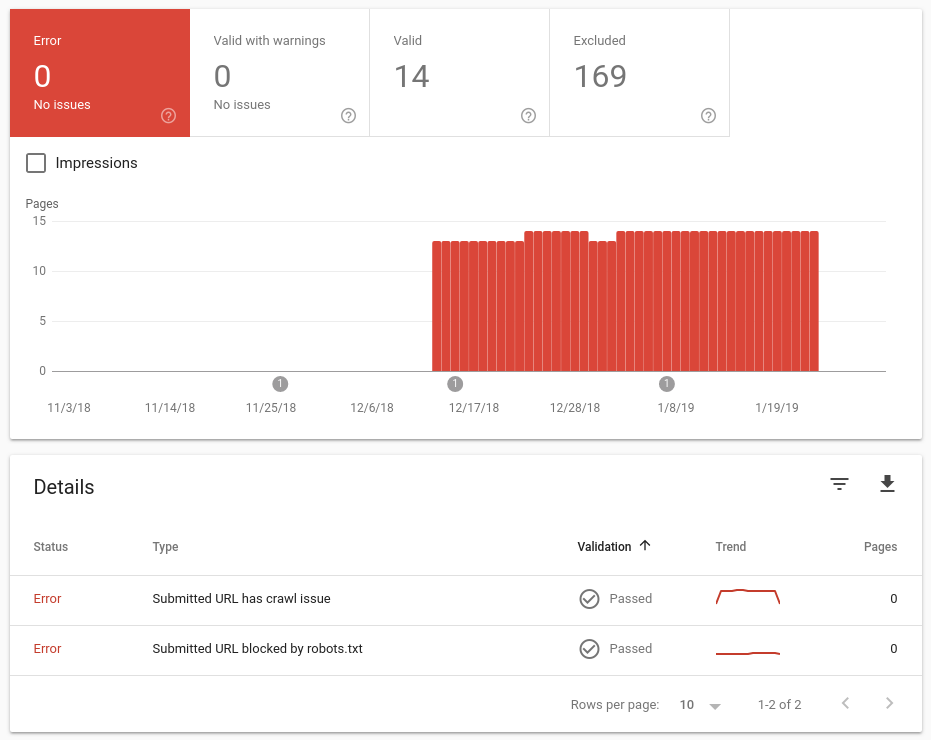

3. Use search engine webmaster tools

There’s no point in creating your website if nobody is visiting it!

Several search engines have “webmaster tool” portals. These enable the owner of a site to see and influence how the search engine sees their site.

At the time of writing, the biggest search engines in the world, in order of size are as below. These four search engines account for over 98.5% of web searches.

- Google

Google Search Console provides insight and tools to measure your site’s performance in Google. This includes impressions and clicks, any issues found, and which pages are indexed.

- Baidu (Huge in China)

Never heard of Baidu? Baidu is often referred to as “the Google of China”, handling around 70% of searches from China. Google handles around 2%.

Given the size of China, if your site may be of interest to people there, you should make sure your site is listed with Baidu.

Unfortunately it appears you can currently only sign up for their Webmaster Tools if you have a Chinese mobile phone number.

- Bing

Bing Webmaster Tools provides more functionality than Google’s Search Console, though isn’t quite as nice to use in my opinion.

They provide the basics, but also tools for search engine optimisation around your code quality and mobile optimisation. You can even tell Bing the times of day to crawl your website, to avoid busy times on your server. Personally, I just use it for the core metrics and error information.

- Yahoo!

Yahoo! uses Bing data, and therefore does not have a separate portal for webmasters.

With each of the search engines above, I recommend you search to make sure your site appears in their results. Also use the webmaster tools (exclude Baidu if you’re unable to sign up) to check for any “issues” they are having with your site. I have found issues before with duplicate content due to Google seeing multiple URLs on WordPress for the same content. You can also, in the case of Google, see pages that have been discovered by Google, but that it has decided not to index. Sadly it won’t tell you the reason, though if they’re important pages, it gives you something to investigate before it impacts your traffic too much.

4. Create and submit a sitemap

A sitemap is simply a list of all pages on your site that you’d like a search engine to be aware of. It also includes the frequency recommended for search engines to revisit the page, and a relative priority of each page within your site (relative to each other).

If you have a template-based website (e.g. WordPress, Joomla etc.), there are plugins out there that will automatically maintain a sitemap for you (I recommend Google XML Sitemaps for WordPress sites).

If your website is custom coded, or plugins don’t give what you need, I would strongly recommend writing a script to create the sitemap for you. This will save you having to maintain it manually with every page addition.
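As a starting point, such a script could be sketched in Python roughly as below. It builds the XML in the sitemaps.org format from a list of pages, each with a suggested revisit frequency and relative priority. The page list, URLs and the `build_sitemap` name are made-up examples; a real script would gather the pages from your own files or database.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, changefreq, priority) tuples."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, changefreq, priority in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "changefreq").text = changefreq
        ET.SubElement(entry, "priority").text = f"{priority:.1f}"
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages, for illustration only.
EXAMPLE_PAGES = [
    ("https://www.example.com/", "weekly", 1.0),
    ("https://www.example.com/about", "monthly", 0.5),
]

sitemap_xml = build_sitemap(EXAMPLE_PAGES)
```

Writing `sitemap_xml` to a file such as `sitemap.xml` in your site root gives you a URL to submit. Running the script whenever you publish keeps the list current without manual edits.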

Once created, log into the search engine webmaster tools mentioned above and submit the sitemap URLs. This will make sure the search engine is aware of the pages. Note that it does not guarantee they will be indexed.

5. Try your site in different browsers and devices

You probably use your own device(s) to check changes to your website on a “common setup”. Whilst this is logical, each setup (device type, and browser in particular) can interpret sites slightly differently, and may have a significant impact on your visitors.

For example, when I recently installed a new SSL certificate on one of my sites, it looked fine in my normal browser, but when I opened it in Firefox as a test… it threw an “insecure” error. Firefox was not happy with the new certificate.

You can’t check everything, but I recommend you:

- Install at least one extra top-3 browser on your PC for testing

The latest desktop browser split at the time of writing is below. I recommend having a couple of the top three browsers installed, for quick testing in two common browsers.

Chrome: 70.19%

Edge: 8.07%

Firefox: 7.58%

Internet Explorer: 4.53%

Safari: 3.56%

Source: NetMarketShare.com June 2020 Browser Market Share Report (Desktop/Laptop)

- Check your site on your phone

Over 52% of website traffic is from smartphones. So if you’re only testing on a PC, you’re not actually experiencing your site the same way your average user is. Pick up your smartphone and check your site looks good, and works well on a phone. You can also shrink the size of your PC browser window, or use Developer Tools in your browser to replicate different sizes of display. I try to replicate smartphone, tablet and PC window sizes.

- Use Browser Compatibility test sites

There are some sites out there that can help. http://browsershots.org, for example, will open a URL in a ton of different operating system and browser combinations. The thumbnails it creates make it easy to see any major differences, and click through for a more detailed view. Personally I just use this on significant pages. I ignore any issues from obscure or outdated browsers, as this site tests some stupidly old browsers.

- Ask friends, family, and community members to help out

Asking others to quickly take a look will probably get you a whole host of feedback along with the basics. This way you’ll naturally get a mix of browsers and operating systems tested (even better if you ask them to try their phone, tablet and PC/laptop). You should get some useful feedback about any site content and usability too.

Don’t just use friends and family though, if you’re active on any forums you may be able to ask for some willing volunteers there. Check the forum rules though as you don’t want to be seen to be spamming the forum.

6. Check web accessibility, SEO and Social Media rankings

There are simply loads of “good bots” out there that you can point at your website to review the content. For a general website review that leans towards Search Engine Optimisation (SEO), I recommend https://nibbler.silktide.com. This is different to tip 2, where we looked purely at code standards. Nibbler looks at social media setup, activity, inbound links, “freshness” of content, as well as good practices like compression.

It’s free, so give it a go and see how your site scores, and more importantly, where you can improve things.

7. Get a domain healthcheck

There are a wide range of skills needed to run a website. There are few people who can honestly say they’ve mastered them all.

When it comes to the core connectivity of DNS, I trust MXToolBox. They provide a lot of free website owner tools. A great place to start for site owners is their Domain Health Check and their DNS Check. These check everything from core nameserver setup (DNS Check), to the setup of relevant SPF and DMARC records on your domain, for email spam protection purposes (Domain Health Check).

There is a specific SPF record checker at https://spf-checker.org/.
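For a rough idea of what these checkers look for in an SPF record, here is a small Python sketch that sanity-checks the text of a record you paste in. It does not look anything up in DNS and covers only a few of the rules, so treat it as an illustration rather than a replacement for the tools above. The `check_spf` name and the sample records are assumptions of mine; the limit of 10 DNS-querying terms comes from the SPF specification (RFC 7208).

```python
# Terms that trigger a DNS lookup when a receiving server evaluates SPF.
LOOKUP_TERMS = ("include", "a", "mx", "ptr", "exists", "redirect")


def check_spf(record):
    """Return a list of problems found in an SPF TXT record string."""
    terms = record.split()
    if not terms or terms[0].lower() != "v=spf1":
        return ["record does not start with v=spf1"]

    problems = []
    # Strip qualifiers (+, -, ~, ?) and keep only each term's name,
    # e.g. "include:_spf.example.com" -> "include", "a/24" -> "a".
    names = []
    for term in terms[1:]:
        bare = term.lower().lstrip("+-~?")
        names.append(bare.replace("=", ":").split(":")[0].split("/")[0])

    if "all" not in names and "redirect" not in names:
        problems.append("no 'all' mechanism or redirect modifier")
    if any(term.lower() in ("all", "+all") for term in terms[1:]):
        problems.append("'+all' lets any server send mail for the domain")

    lookups = sum(1 for name in names if name in LOOKUP_TERMS)
    if lookups > 10:
        problems.append("more than 10 terms that require DNS lookups")
    return problems
```

An empty list means none of these basic problems were spotted; anything else is worth a closer look with a full checker.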

8. Back up!

It’s the job we love putting off, but it really is essential. If you haven’t backed up since your last edit/post, you’re running a risk you probably don’t want to be.

Don’t forget to back up databases as well as files.
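If your host doesn’t offer automatic backups, the file side can be scripted fairly easily. The Python sketch below archives a site folder into a timestamped .tar.gz file. The `backup_site` name and the folder paths you pass in are placeholders of mine, and it covers files only: the database needs its own export (for MySQL, for example, the `mysqldump` tool is a common choice), which depends on your setup.

```python
import tarfile
import time
from pathlib import Path


def backup_site(site_dir, backup_dir):
    """Archive site_dir into a timestamped .tar.gz inside backup_dir."""
    site_dir = Path(site_dir)
    backup_dir = Path(backup_dir)
    backup_dir.mkdir(parents=True, exist_ok=True)

    # The timestamp keeps earlier backups instead of overwriting them.
    stamp = time.strftime("%Y%m%d-%H%M%S")
    archive_path = backup_dir / f"{site_dir.name}-{stamp}.tar.gz"

    with tarfile.open(str(archive_path), "w:gz") as archive:
        # arcname stores paths relative to the site folder's own name.
        archive.add(str(site_dir), arcname=site_dir.name)
    return archive_path
```

Keep the resulting archives somewhere other than the web server itself, and try restoring one occasionally to confirm the backup actually works.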

Conclusion

This is just a short list of priority items. Are there any items you feel I should have added though? If so, let me know in the comments.

All the best with your websites.